Wasting Bandwidth One Image at a Time...

Content management systems are great: they give the average Joe a great level of control over their website. Gone are the days of clients asking for static pages to be amended. We now live in a database-powered world that gives the power of editing and updating content to the client.

Our clients get to use rich text editors (RTEs) like FCKEditor. These look and feel just like Microsoft Word: clients can play with text, upload images and resize them simply by dragging, and they are very often really happy.

However, this often comes at a cost. By default, most RTEs resize images simply by setting the height and width attributes on the HTML img tag. As many people are aware, this doesn't actually resize the image; it just tells the browser to take the massive image and render it smaller. The end user still has to download the huge image. On some sites I have seen 2000px x 1200px images being downloaded and then shown at only 250px x 120px, which is 80 times as many pixels as end up on screen. That can take a while to load, depending on the user's internet connection, and ultimately we, the developers, have to pay the bandwidth cost for those images.

Tonight I decided to come up with something I could put on my church websites to combat this. It's more about improving the overall user experience than cutting bandwidth cost, but it all helps ;)

The Solution

The solution is actually really simple.

- Take the CMS content from the database

- Before rendering it to the page, load it into an HTML parser

- Using the parser, find all the image tags

- Use the height, width and src attributes to generate a new URL pointing at an image resizer app

- Replace each image's src attribute with the new URL

- Render the content to the page

Parsing HTML in ASP.NET really is easy nowadays. Previously it was a pain: regex would never work properly, or you had to risk parsing your page as XML. Luckily there are now tons of third-party libraries. I chose Html Agility Pack, as a lot of people seemed to recommend it on Stack Overflow.

For my solution I simply did the following:

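    // At the top of the file:
    using System;
    using HtmlAgilityPack;

    // Load the CMS content (page.body comes from the database model).
    HtmlDocument doc = new HtmlDocument();
    doc.LoadHtml(page.body);

    // SelectNodes returns null, not an empty collection, when nothing matches.
    HtmlNodeCollection imgs = doc.DocumentNode.SelectNodes("//img");
    if (imgs != null)
    {
        foreach (HtmlNode img in imgs)
        {
            HtmlAttribute src = img.Attributes["src"];
            HtmlAttribute width = img.Attributes["width"];
            HtmlAttribute height = img.Attributes["height"];

            // Only rewrite images that have a source and explicit dimensions.
            if (src != null && width != null && height != null)
            {
                // Escape the original URL so it survives as a query string value.
                src.Value = String.Format(
                    "/loadImage.aspx?image={0}&action={1}&width={2}&height={3}",
                    Uri.EscapeDataString(src.Value), "resizecrop", width.Value, height.Value);
            }
        }
    }

    // Write the modified markup into the page.
    mainContent.Text = doc.DocumentNode.InnerHtml;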

So what's going on here? First I create a new HtmlDocument, provided by Html Agility Pack, and load my HTML from my database model, page.body. Then I use a nice simple XPath expression to pull out any img tags within the content and put them into an HtmlNodeCollection. Next, after ensuring I have some nodes, I spin through each of them and check whether they have height and width attributes. If they do, I use these and the original image URL to write a new URL. In my case I have a page that does the resizing, though ideally this should be an HttpModule or something similar to be nice and tidy. Finally, with the img tags updated, I render the HtmlDocument back out to the page.
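One thing the loop above takes on trust is that width and height hold plain pixel counts. An attribute could just as easily contain something like 50%, and that would be passed straight through to the resizer. As a rough sketch, a small helper could validate both values and escape the image URL in one place. ResizeUrlBuilder and TryBuild are names made up for illustration; only the /loadImage.aspx URL shape comes from the code above.

```csharp
using System;
using System.Globalization;

public static class ResizeUrlBuilder
{
    // Hypothetical helper, not part of the original solution.
    // Returns false unless src is present and both dimensions are plain
    // positive integers such as "250"; values like "50%" or "250px" fail.
    public static bool TryBuild(string src, string width, string height, out string url)
    {
        url = null;

        // Allow surrounding whitespace but no signs, decimals or units.
        const NumberStyles digitsOnly =
            NumberStyles.AllowLeadingWhite | NumberStyles.AllowTrailingWhite;

        int w, h;
        if (string.IsNullOrWhiteSpace(src)
            || !int.TryParse(width, digitsOnly, CultureInfo.InvariantCulture, out w)
            || !int.TryParse(height, digitsOnly, CultureInfo.InvariantCulture, out h)
            || w <= 0 || h <= 0)
        {
            return false;
        }

        // Escape the original URL so any '&' or '?' inside it cannot be
        // mistaken for part of the resizer's own query string.
        url = string.Format(
            CultureInfo.InvariantCulture,
            "/loadImage.aspx?image={0}&action=resizecrop&width={1}&height={2}",
            Uri.EscapeDataString(src), w, h);
        return true;
    }
}
```

In the loop, the src would then only be replaced when TryBuild returns true, leaving any image it cannot make sense of exactly as the editor saved it. On the receiving side, ASP.NET's Request.QueryString hands back the decoded value, so the resizer page sees the original URL.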

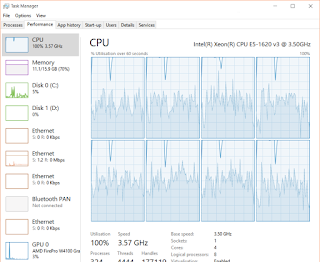

That's it, a few lines of code. I was concerned I might have slowed down my page, as parsing and spinning through tags could be CPU intensive. However, from quickly playing with it on my machine, I noticed no real difference. In production I'd output cache the page for a period of time anyway. I noticed a 100KB difference on the home page of one of my sites, and those weren't massive images, so I think it's well worth it.

There you have it. At the end of the day, CMSs provide users with a lot of power and flexibility, but as developers we have to provide systems that cope with users abusing them, whether by uploading massive images or otherwise. In the case of images, simply having something that resizes them on render keeps the frontend responsive whilst still allowing the client to provide high-resolution images if needed. In this case it wasn't a lot of effort, and the improvement alone was worth it.
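The resizer page itself isn't shown here. As a rough sketch of the geometry a resizecrop action could use, the helper below assumes the action means "keep the largest centred region of the source that has the same shape as the requested size, then scale that region down". That reading of resizecrop is an assumption, and ResizeCropMath and CenterCrop are hypothetical names. The actual pixel work (for example with System.Drawing) is left out.

```csharp
using System;

public static class ResizeCropMath
{
    // Hypothetical helper: works out which centred rectangle of the source
    // image (in source pixels) has the same aspect ratio as the target size.
    public static void CenterCrop(
        int srcW, int srcH, int targetW, int targetH,
        out int x, out int y, out int cropW, out int cropH)
    {
        if (srcW <= 0 || srcH <= 0 || targetW <= 0 || targetH <= 0)
        {
            throw new ArgumentException("All dimensions must be positive.");
        }

        // Compare aspect ratios by cross-multiplying; long avoids overflow.
        if ((long)srcW * targetH > (long)srcH * targetW)
        {
            // Source is relatively wider than the target:
            // keep the full height and trim the sides.
            cropH = srcH;
            cropW = Math.Max(1, (int)Math.Round((double)srcH * targetW / targetH));
        }
        else
        {
            // Source is relatively taller (or the same shape):
            // keep the full width and trim the top and bottom.
            cropW = srcW;
            cropH = Math.Max(1, (int)Math.Round((double)srcW * targetH / targetW));
        }

        // Centre the crop rectangle inside the source image.
        x = (srcW - cropW) / 2;
        y = (srcH - cropH) / 2;
    }
}
```

For the 2000px x 1200px image shown at 250px x 120px mentioned earlier, this keeps the full 2000px width and trims 120px from both the top and the bottom, leaving a 2000px x 960px region to scale down.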
